The "Glass Box" KPI: Engineering Trust in Enterprise AI

Published on 3/20/2024

As organizations move Large Language Models (LLMs) from drafting emails to driving core operations, the conversation has shifted. The question is no longer "What can this model do?" but "Can we measure the risk of it being wrong?"

In high-stakes domains like Supply Chain and Retail, a "black box" algorithm is a liability. If an LLM recommends a million-dollar inventory shift, "because the model said so" is not an acceptable answer for a CFO. Effective governance is no longer just about avoiding EU AI Act fines (up to 7% of global annual turnover for the most serious violations); it is about decision confidence.

From a product lens, we must stop building black boxes and start architecting "glass boxes". But to do that, we need to move beyond vague "trust" and start tracking concrete governance metrics.

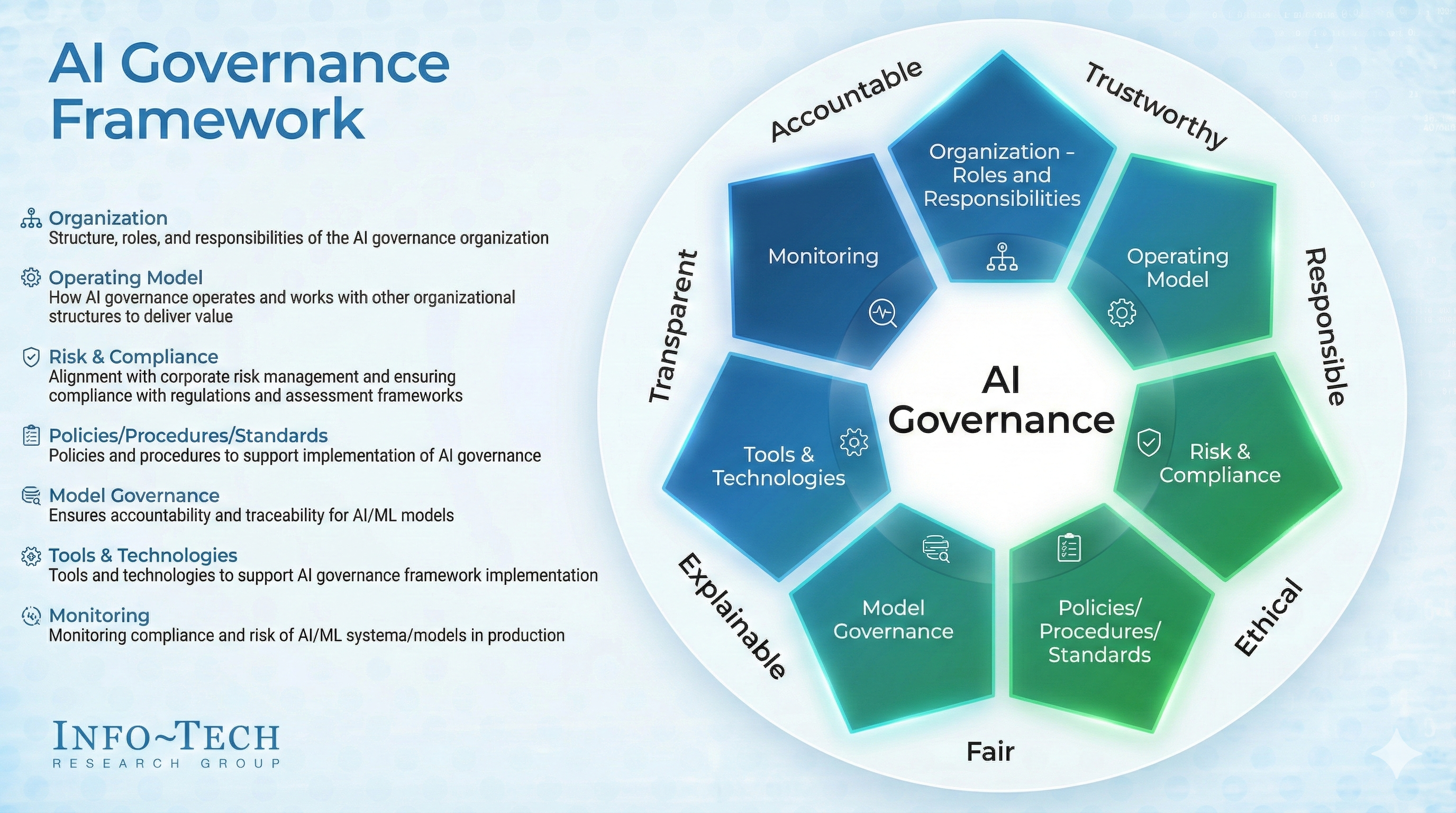

Fig 1: AI Governance Framework

Here is the Product-Centric Governance Framework for turning interpretability into a competitive moat.

1. Quantifying the Risk: The Governance Maturity Score

You cannot manage what you do not measure. Product leaders should implement a composite Governance Maturity Score to gate deployments. A robust baseline formula weights interpretability above raw performance.

Target: a score above 0.8 before moving from pilot to production, so that your ability to explain the model keeps pace with the model's complexity.
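Below is a minimal sketch of such a gate. It assumes four hypothetical components (interpretability, fairness, drift monitoring and performance), each already normalized to [0, 1]. The weights are illustrative assumptions, not a published formula. Only the 0.8 target comes from the framework.

```python
from dataclasses import dataclass

# Illustrative weights (assumption, not a published formula). Interpretability
# gets the largest weight so it outranks raw performance. Weights sum to 1.0.
WEIGHTS = {
    "interpretability": 0.4,
    "fairness": 0.2,
    "drift_monitoring": 0.2,
    "performance": 0.2,
}
PRODUCTION_GATE = 0.8  # pilot-to-production target from the framework


@dataclass
class ComponentScores:
    """Hypothetical component scores, each pre-normalized to [0, 1]."""
    interpretability: float
    fairness: float
    drift_monitoring: float
    performance: float


def governance_maturity_score(scores: ComponentScores) -> float:
    """Weighted average of the component scores."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = getattr(scores, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
        total += weight * value
    return total


def ready_for_production(scores: ComponentScores) -> bool:
    """Deployment gate: the score must exceed the 0.8 target."""
    return governance_maturity_score(scores) > PRODUCTION_GATE


pilot = ComponentScores(interpretability=0.9, fairness=0.85,
                        drift_monitoring=0.8, performance=0.95)
opaque = ComponentScores(interpretability=0.5, fairness=0.9,
                         drift_monitoring=0.9, performance=1.0)
print(round(governance_maturity_score(pilot), 2), ready_for_production(pilot))    # 0.88 True
print(round(governance_maturity_score(opaque), 2), ready_for_production(opaque))  # 0.76 False
```

With these weights, a model with perfect performance but weak interpretability still stays in pilot. That is the behavior the framework asks for.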

2. The Three Layers of "Reasoning Governance"

Interpretability must be treated as a feature set, not a diagnostic tool. We need to implement a tiered approach to "Reasoning Governance".

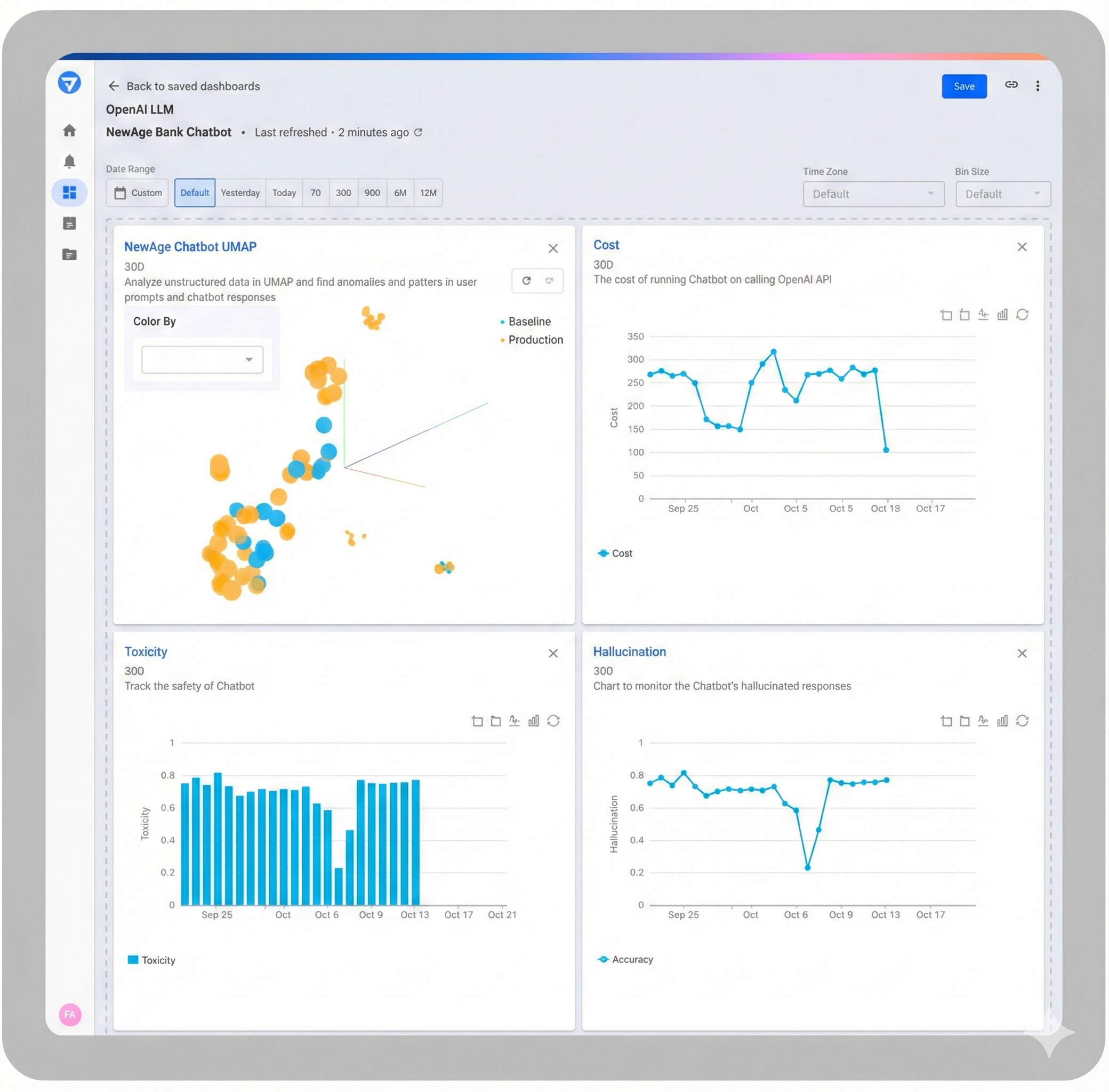

Fig 2: Explainable AI

Level 1: Source Attribution (The "Receipt")

In RAG (Retrieval-Augmented Generation) architectures, every output must include a citation. In internal pilots, grounding recommendations in ERP data (e.g., SAP/Oracle) reduced error rates by 40%.

- Example: JD.com’s Supply Chain Planning Agents (SCPA) utilize modular task execution where every step is documented, creating a trace that allows planners to audit the specific data inputs driving the plan.
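One way to enforce the "receipt" rule is a hard check before any recommendation reaches a planner. The recommendation must cite at least one record, and every cited ID must come from the documents the retriever actually returned. This is a minimal sketch only. The `Recommendation` type, the ID format and the record IDs are hypothetical; they are not part of SAP, Oracle or the JD.com system.

```python
from dataclasses import dataclass, field


@dataclass
class Recommendation:
    """A model output plus the IDs of the source records it cites (hypothetical)."""
    text: str
    cited_ids: list[str] = field(default_factory=list)


def citation_problems(rec: Recommendation, retrieved_ids: set[str]) -> list[str]:
    """Return reasons the 'receipt' is invalid; an empty list means it passes."""
    problems = []
    if not rec.cited_ids:
        problems.append("no citation attached")
    for cid in rec.cited_ids:
        # A citation must point at a record that was actually retrieved,
        # otherwise it cannot be audited against the ERP data.
        if cid not in retrieved_ids:
            problems.append(f"cites {cid!r}, which was not retrieved")
    return problems


retrieved = {"erp:po-1042", "erp:inv-0077"}  # hypothetical ERP record IDs
ok = Recommendation("Move 500 units to DC-East", ["erp:inv-0077"])
bare = Recommendation("Move 500 units to DC-East")
print(citation_problems(ok, retrieved))    # []
print(citation_problems(bare, retrieved))  # ['no citation attached']
```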

Level 2: Real-Time Drift Detection (The Early Warning)

Supply chain models degrade when the world changes faster than the training data can keep up. Don't wait for a bad forecast. Use the Kolmogorov-Smirnov (KS) test to monitor input drift by comparing each input's live distribution against its training-period baseline.
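Consider a single input feature with two windows:

- a reference window (for example, the training period) with empirical CDF $F_{\text{ref},n}$ over $n$ observations;
- a live window with empirical CDF $F_{\text{live},m}$ over $m$ observations.

The two-sample KS statistic is the largest vertical gap between these two CDFs. Under the standard large-sample approximation, drift is flagged at significance level $\alpha$ when $D_{n,m}$ exceeds the critical value on the right:

$$
D_{n,m} = \sup_x \left| F_{\text{ref},n}(x) - F_{\text{live},m}(x) \right|,
\qquad
D_{n,m} > c(\alpha)\sqrt{\frac{n+m}{nm}},
\quad
c(\alpha) = \sqrt{-\tfrac{1}{2}\ln\!\left(\tfrac{\alpha}{2}\right)}
$$

For $\alpha = 0.05$, $c(\alpha) \approx 1.36$.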

If demand patterns shift significantly (e.g., a seasonal surge or a sudden port strike), this check alerts the system that its "mental map" is outdated before it makes a costly recommendation.
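Here is a dependency-free sketch of the drift check for one numeric feature. It computes the two-sample KS statistic directly and compares it to the standard large-sample critical value. The `alpha` default of 0.05 and the demand series are illustrative assumptions.

```python
import bisect
import math


def ks_statistic(reference: list[float], live: list[float]) -> float:
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    if not reference or not live:
        raise ValueError("both windows need at least one observation")
    ref, cur = sorted(reference), sorted(live)
    n, m = len(ref), len(cur)
    d = 0.0
    # Both ECDFs are step functions that only jump at observed values, so
    # evaluating at every pooled observation finds the supremum.
    for x in ref + cur:
        f_ref = bisect.bisect_right(ref, x) / n  # share of reference values <= x
        f_cur = bisect.bisect_right(cur, x) / m  # share of live values <= x
        d = max(d, abs(f_ref - f_cur))
    return d


def drift_detected(reference: list[float], live: list[float],
                   alpha: float = 0.05) -> bool:
    """Flag drift when D exceeds the large-sample critical value at level alpha."""
    d = ks_statistic(reference, live)  # also validates the inputs
    n, m = len(reference), len(live)
    c_alpha = math.sqrt(-0.5 * math.log(alpha / 2))
    threshold = c_alpha * math.sqrt((n + m) / (n * m))
    return d > threshold


# Hypothetical weekly demand: the live window sits 50 units higher.
baseline = [float(v) for v in range(100)]
shifted = [v + 50.0 for v in baseline]
print(ks_statistic(baseline, shifted), drift_detected(baseline, shifted))
print(drift_detected(baseline, baseline))
```

In the example, D is about 0.5, well above the threshold of about 0.19 for two windows of 100 points. In a production pipeline, `scipy.stats.ks_2samp` returns the same statistic along with a p-value. The hand-rolled version just makes the mechanics visible.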

Level 3: Explanation Fidelity & Counterfactuals

It is not enough for the model to be accurate; the explanation must also be faithful to it. We track Explanation Fidelity, requiring that the post-hoc explanation agree with the model's actual predictions in more than 90% of cases.
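This sketch assumes one common way to measure fidelity: the agreement rate between the model's decisions and those of the interpretable surrogate behind the explanation, computed on held-out cases. The surrogate might be, for example, a linear model or shallow tree fitted to mimic the model. The version below is for categorical decisions. For continuous forecasts, an R² between surrogate and model outputs is the usual analogue. The decision labels are hypothetical.

```python
FIDELITY_TARGET = 0.90  # ">90% match" requirement from the framework


def explanation_fidelity(model_preds: list[str], surrogate_preds: list[str]) -> float:
    """Share of held-out cases where the explanation's surrogate agrees with the model."""
    if not model_preds or len(model_preds) != len(surrogate_preds):
        raise ValueError("need two non-empty prediction lists of equal length")
    matches = sum(a == b for a, b in zip(model_preds, surrogate_preds))
    return matches / len(model_preds)


def passes_fidelity_gate(model_preds: list[str], surrogate_preds: list[str]) -> bool:
    """The explanation is trusted only if fidelity is strictly above the target."""
    return explanation_fidelity(model_preds, surrogate_preds) > FIDELITY_TARGET


# Hypothetical held-out decisions: the surrogate disagrees on 1 of 20 cases.
model = ["reorder"] * 10 + ["hold"] * 10
surrogate = ["reorder"] * 10 + ["hold"] * 9 + ["reorder"]
print(explanation_fidelity(model, surrogate), passes_fidelity_gate(model, surrogate))  # 0.95 True
```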

- The "What-If" Dashboard: Pair explanation tools like LIME or SHAP with controls that let users toggle variables ("What if fuel costs rise 10%?") and see how the recommendation and its drivers change. Internal pilots show this interactivity boosts user adoption by 25%.
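Under the hood, a what-if toggle is a perturbation: copy the inputs, change one variable and re-score. This minimal sketch assumes the model is exposed as a plain function of named features. `toy_landed_cost` and its 0.3 fuel coefficient are hypothetical stand-ins for a real model. LIME or SHAP would then explain why the new prediction differs; this helper only computes by how much.

```python
from typing import Callable, Mapping

Features = Mapping[str, float]


def what_if(predict: Callable[[Features], float], inputs: Features,
            feature: str, pct_change: float) -> dict[str, float]:
    """Re-run the model with one feature scaled by (1 + pct_change)."""
    if feature not in inputs:
        raise KeyError(f"unknown feature: {feature}")
    scenario = dict(inputs)  # copy so the original inputs stay untouched
    scenario[feature] = inputs[feature] * (1 + pct_change)
    baseline = predict(inputs)
    changed = predict(scenario)
    return {"baseline": baseline, "scenario": changed, "delta": changed - baseline}


def toy_landed_cost(x: Features) -> float:
    """Hypothetical per-unit landed-cost model, standing in for the real one."""
    return x["unit_cost"] + 0.3 * x["fuel_cost"] + x["duty"]


inputs = {"unit_cost": 10.0, "fuel_cost": 5.0, "duty": 1.0}
result = what_if(toy_landed_cost, inputs, "fuel_cost", 0.10)  # fuel +10%
print({k: round(v, 2) for k, v in result.items()})
```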

3. Solving the Bias Equation in Retail

In personalization and pricing, opaque models can replicate historical biases, leading to reputational damage. Governance requires mathematical constraints, not just policy documents. Use Demographic Parity metrics to audit dynamic pricing.
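Demographic parity asks whether the rate of a favorable outcome is the same across demographic groups $A$. Here the favorable outcome is being shown a premium offer ($\hat{Y} = 1$). The audited gap is the largest difference in that rate between any two groups $a$ and $b$:

$$
\Delta_{\text{DP}} = \max_{a,\,b} \left| P(\hat{Y}=1 \mid A=a) - P(\hat{Y}=1 \mid A=b) \right|
$$

A model satisfies demographic parity exactly when $\Delta_{\text{DP}} = 0$.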

If the difference in premium-offer rates between demographic groups exceeds 5%, the model is flagged for retraining. This moves fairness from a "nice-to-have" to a hard deployment gate.
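Here is a minimal audit sketch. It assumes that the 5% threshold means an absolute gap of 0.05 between group offer rates. It also assumes pricing logs can be reduced to hypothetical (group, was_offered) pairs. With more than two groups, the largest pairwise gap is simply the highest rate minus the lowest.

```python
from collections import defaultdict
from typing import Iterable

PARITY_THRESHOLD = 0.05  # assumed: an absolute gap of 0.05 in offer rates


def offer_rates(records: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Premium-offer rate per group from (group, was_offered) records."""
    offered: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for group, was_offered in records:
        total[group] += 1
        offered[group] += int(was_offered)
    return {g: offered[g] / total[g] for g in total}


def parity_gap(records: Iterable[tuple[str, bool]]) -> float:
    """Largest difference in offer rate between any two groups."""
    rates = offer_rates(records)
    if len(rates) < 2:
        return 0.0  # a single group has nothing to compare against
    return max(rates.values()) - min(rates.values())


def flag_for_retraining(records: Iterable[tuple[str, bool]]) -> bool:
    """Hard deployment gate: flag the model when the gap exceeds the threshold."""
    return parity_gap(records) > PARITY_THRESHOLD


# Hypothetical audit log: group A sees premium offers 30% of the time, B 22%.
log = [("A", i < 30) for i in range(100)] + [("B", i < 22) for i in range(100)]
print(offer_rates(log), round(parity_gap(log), 2), flag_for_retraining(log))
```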

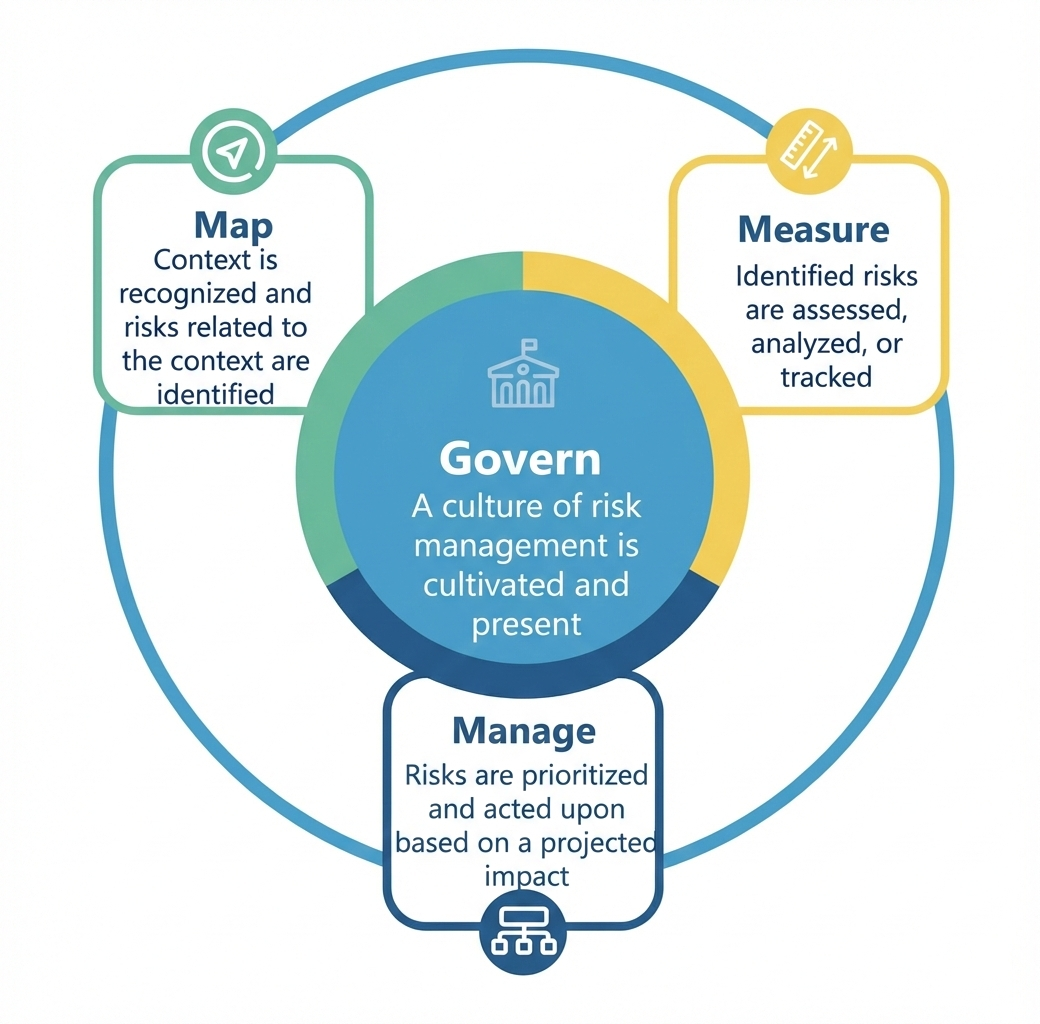

Fig 3: NIST Risk Management Framework

Summary: Governance is an Accelerator

Organizations that treat governance as a compliance checkbox create "organizational entropy"—where teams maintain parallel spreadsheets because they don't trust the AI.

The winners will be those who use frameworks like LEXMA to generate audience-specific explanations and deploy "Critic" agents to audit recommendations before they reach the user. By baking these metrics into the product lifecycle, enterprises don't just reduce risk; they accelerate decision velocity.

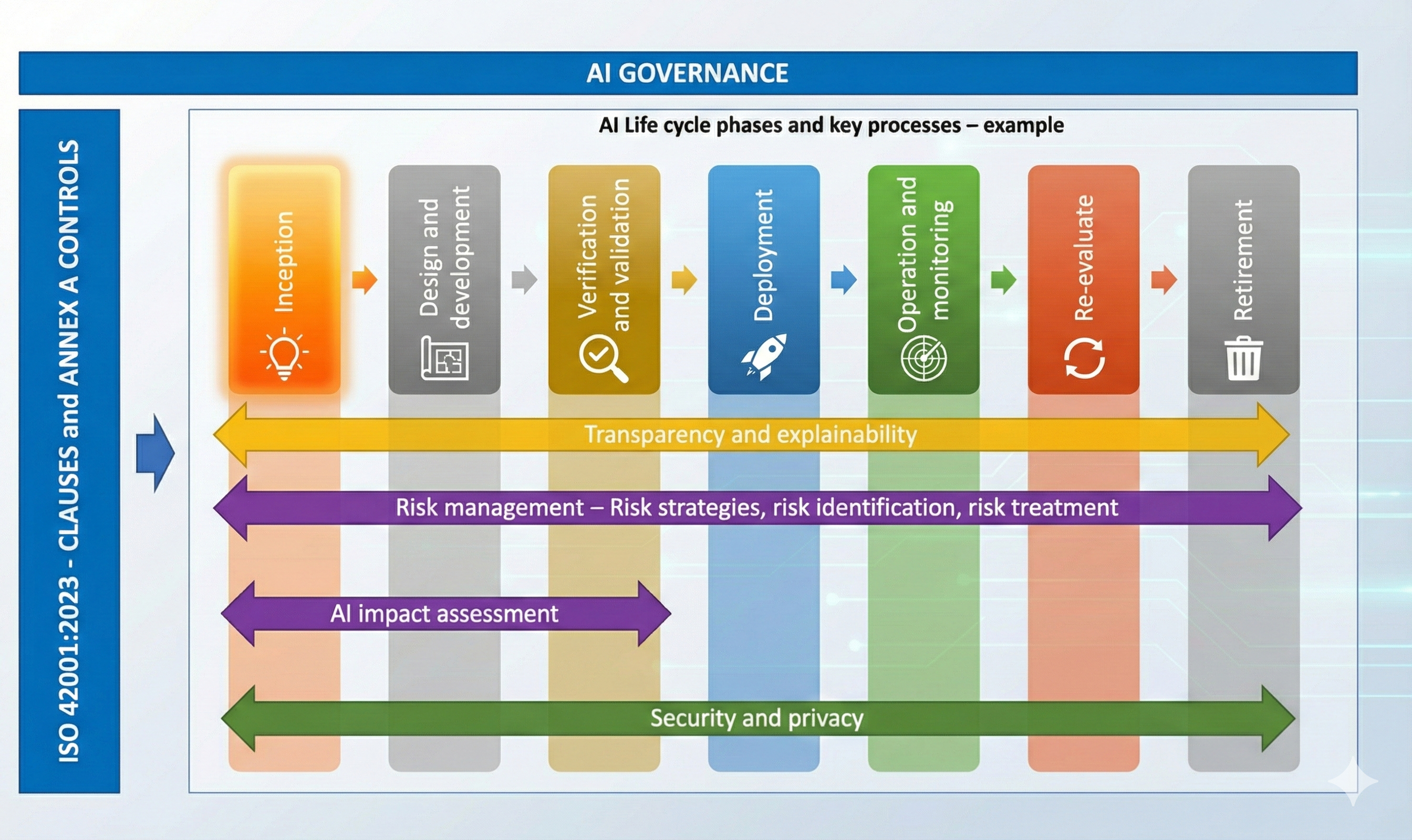

Fig 4: AI lifecycle management: ISO / IEC 42001:2023

The future of Enterprise AI isn't just about intelligence. It's about accountability.

Sources:

- JD.com Supply Chain Planning Agent (SCPA) Case Study

- McKinsey (Bias in Supply Chain)

- EU AI Act (Regulatory penalties)

- LEXMA Framework (Audience-specific explainability)

- Internal Pilot Metrics (Adoption uplift & Error reduction)